Trippr audio programming: The callback (part 2)

December 9, 2018 - Jos van Tol

So last time we discussed the basics of digital audio, and concluded with a simple command line tool that exported audio to WAV files. But what if we want to listen to the audio in real time? And what if we want to change the parameters on the fly?

In that case we have to talk more directly to our sound card. How that works depends a great deal on your hardware. Thankfully, our bloated and rusty operating system can help us with that: it offers APIs (application programming interfaces) that let us talk to the hardware. On Windows that is XAudio, macOS has Core Audio, and Linux has problems.

In this particular case I used a cross-platform library that sits on top of these, called the Simple DirectMedia Layer (SDL). SDL is fairly low-level, small, and very portable, so you're welcome to follow along on whatever system you like.

We need an audio buffer.

I've talked about sample rate and bit depth. Now we'll add buffers. An audio buffer is simply a very short snippet of audio held in memory. But not too short. And not too long... You'll see.

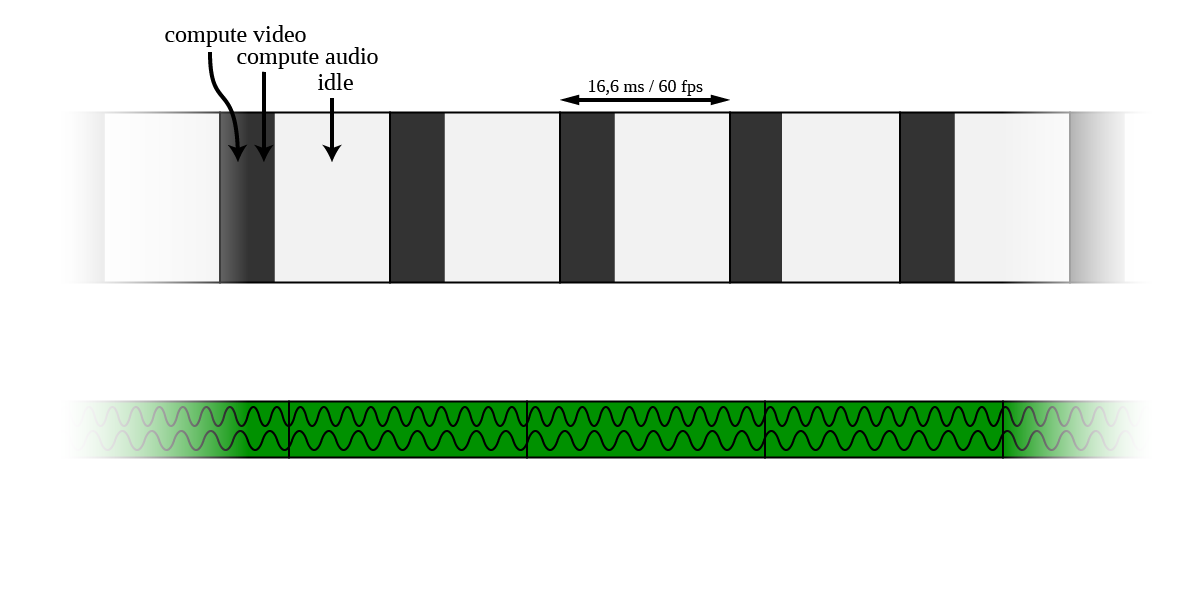

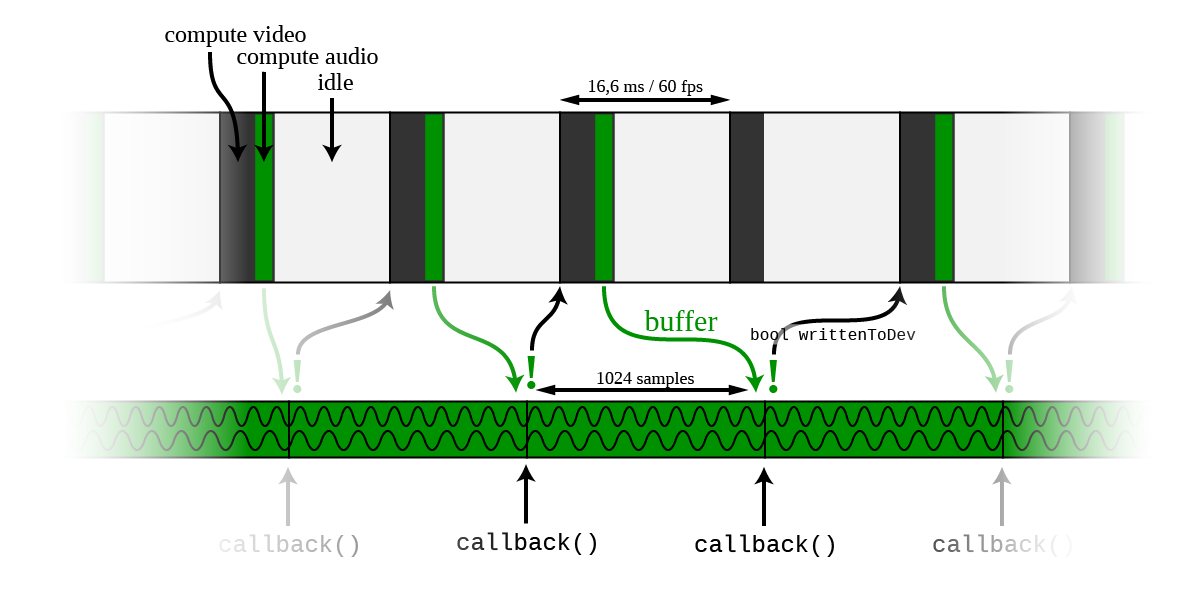

The engine behind Trippr runs at 60 frames per second, for a few reasons. Most modern computer screens refresh at that rate. Most of the time the computer finishes calculating the next frame well within the 16.6 milliseconds (1/60th of a second) available per frame. But it's still smart to wait out the rest of that time: to stay in sync with the screen's refresh rate, and to save battery life on a mobile device, since the CPU gets to sleep most of the time! Remember that even one millisecond is a long time for a computer.
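The "wait for the rest of the frame" idea boils down to simple arithmetic. Here is a minimal sketch, assuming we measure how long the frame's work took in milliseconds. The function name ms_to_sleep is hypothetical; in an SDL program the result could be handed to SDL_Delay(), which sleeps for a whole number of milliseconds.

```c
// Hypothetical helper: milliseconds left to sleep in the current frame,
// given how long this frame's work took. Returns 0 if the work overran
// the frame budget, so we never try to sleep a negative amount.
double ms_to_sleep(double elapsed_ms, int fps)
{
    double budget_ms = 1000.0 / fps;      // 60 fps -> about 16.67 ms per frame
    double remaining = budget_ms - elapsed_ms;
    return remaining > 0.0 ? remaining : 0.0;
}
```

For example, at 60 fps a frame whose work took 5 ms leaves roughly 11.67 ms to sleep, while a frame that took 20 ms has overrun its budget and gets 0.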

That timing also gives us a convenient moment to produce our sound samples: we can calculate new samples for the buffer every frame, so every 16.6 milliseconds. Our audio runs at 44,100 samples per second, so we need 44100 / 60 = 735 samples per frame. The audio buffer therefore has to hold at least that many samples (per channel), so there is enough data to send to the audio device before it's time to calculate new samples again.
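The same arithmetic as a tiny C helper (the name samples_per_frame is made up for illustration). Rounding up is an extra assumption here: 44100 / 60 divides evenly, but other combinations may not, and rounding down would leave us a sample short every frame.

```c
// Hypothetical helper: sample frames (per channel) the engine must
// produce each video frame. Uses integer ceiling division so a frame rate
// that doesn't divide the sample rate evenly never comes up one sample short.
int samples_per_frame(int sample_rate, int fps)
{
    return (sample_rate + fps - 1) / fps;
}
```

samples_per_frame(44100, 60) gives 735; with 48000 Hz at 144 fps it would give 334 rather than the truncated 333.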

Setting up the buffer with SDL.

We set this up by opening a so-called audio device with SDL, calling SDL_OpenAudioDevice() when our program initialises. It takes the settings we talked about: the sample rate (44100), the bit depth (16), the number of channels (2) and the buffer size. The buffer size should be a power of two, so we pick 1024: the smallest power of two that is larger than the 735 samples we decided we need. This also gives us some room when, for some reason, we don't make it to the next frame within the 16.6 ms (for example when the CPU is very busy for a moment).
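Picking "the smallest power of two that's larger than 735" can be written as a short loop. This is a minimal sketch with hypothetical helper names; headroom_ms shows how much slack the extra 289 frames buy us at 44.1 kHz (about 6.55 ms).

```c
// Hypothetical helper: smallest power of two >= n, valid for 1 <= n <= 2^31.
// 735 -> 1024, 1024 -> 1024, 1025 -> 2048.
unsigned next_pow2(unsigned n)
{
    unsigned p = 1;
    while (p < n)
        p <<= 1;   // keep doubling until we reach or pass n
    return p;
}

// Hypothetical helper: extra time in milliseconds that a buffer of `frames`
// gives us beyond one video frame's worth (`per_frame`) of samples.
// Assumes frames >= per_frame (the subtraction is unsigned).
double headroom_ms(unsigned frames, unsigned per_frame, unsigned sample_rate)
{
    return 1000.0 * (double)(frames - per_frame) / sample_rate;
}
```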

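```c
SDL_AudioSpec AudioSpec;
SDL_zero(AudioSpec);                     // start from a clean, all-zero spec
AudioSpec.freq = 44100;                  // sample rate in Hz
AudioSpec.format = AUDIO_S16;            // signed 16-bit samples
AudioSpec.channels = 2;                  // stereo
AudioSpec.samples = 1024;                // buffer size in sample frames
AudioSpec.callback = SDL_AudioCallback;  // called whenever the device needs data
AudioSpec.userdata = (void *)Buffer;     // handed to the callback as its first argument

// NULL = default output device, 0 = playback (not capture)
SDL_AudioDeviceID Device = SDL_OpenAudioDevice(NULL, 0, &AudioSpec, NULL, 0);
SDL_PauseAudioDevice(Device, 0);         // devices open paused; unpause to start playback
```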

Note that the number of samples in the buffer is actually counted in "sample frames". A sample frame includes every channel: one sample per channel. So 1024 sample frames of stereo audio means 1024 left samples plus 1024 right samples (or 2048 samples in total when interleaved as L R L R ...). The buffer size is therefore 1024 frames × 2 bytes × 2 channels = 4096 bytes.
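A sketch of the byte-size arithmetic and of where each channel's sample lives in an interleaved buffer. The helper names are hypothetical, and 2 bytes per sample assumes the 16-bit format from above.

```c
#include <stddef.h>

// Hypothetical helper: size in bytes of a buffer holding `frames` sample frames.
size_t buffer_bytes(size_t frames, size_t channels, size_t bytes_per_sample)
{
    return frames * channels * bytes_per_sample;
}

// Hypothetical helper: index of one channel's sample in an interleaved buffer.
// Layout is frame 0 = [L R], frame 1 = [L R], ..., so for stereo the left
// samples sit at even indices and the right samples at odd ones.
size_t interleaved_index(size_t frame, size_t channel, size_t channels)
{
    return frame * channels + channel;
}
```

buffer_bytes(1024, 2, 2) gives the 4096 bytes from the text; in a stereo buffer the left sample of frame n sits at index 2n and the right one at 2n + 1.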

Why not make the buffer huge?! Then we'd never run out of data, right? Well, when the user changes a parameter in the user interface, it can take as long as the buffer lasts before the newly calculated sound reaches the speakers. So the user experiences a lag before hearing back the changes they made.

Why not make the buffer super tiny?! Then we'd always have a super quick and responsive user experience! Well, whenever this tiny buffer runs out of data before new data has been written to it (a so-called buffer underrun), the audio device doesn't know what to play, and I can promise you it will sound horrible.
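Both trade-offs can be put in numbers. A rough sketch with hypothetical helper names: buffer_latency_ms gives how long one buffer lasts (and so, roughly, how long a parameter change can take to be heard), and covers_one_frame checks whether a buffer holds at least one video frame's worth of samples.

```c
// Hypothetical helper: how long a buffer of `frames` sample frames lasts, in ms.
double buffer_latency_ms(unsigned frames, unsigned sample_rate)
{
    return 1000.0 * frames / sample_rate;
}

// Hypothetical helper: 1 if one buffer holds at least one video frame's worth
// of audio. If not, the device drains it before we refill it: an underrun.
int covers_one_frame(unsigned frames, unsigned sample_rate, unsigned fps)
{
    return (unsigned long)frames * fps >= sample_rate;
}
```

At 44.1 kHz, 1024 frames last about 23.2 ms and comfortably cover a 16.6 ms frame, while 512 frames last only about 11.6 ms and would run dry before the next frame comes around.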

The callback function.

So how do we get the samples we carefully calculated to the device? One way is with a callback: SDL_AudioCallback() is a function that SDL calls, but that we have to write ourselves. SDL calls it, from its own audio thread, every time the audio stream to the device needs new data, so our implementation should hand our sample buffer to the stream as quickly as possible. We'll use memcpy() for this. (Strictly speaking, SDL2 already uses the name SDL_AudioCallback for the callback's function-pointer type, so in a real program you would give your own function a different name, such as AudioCallback.)

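```c
#include <stdint.h>
#include <string.h>

// Set on SDL's audio thread, read by the main loop
// (ideally an atomic, e.g. SDL_atomic_t, rather than a plain int).
int writtenToDev = 0;

void SDL_AudioCallback(void *Buffer,      // our userdata: the sample buffer
                       uint8_t *Stream,   // where SDL expects the new data
                       int32_t SizeInBytes)
{
    // Straight up copy buffer to audio device.
    memcpy(Stream, Buffer, (size_t)SizeInBytes);
    // Let the main loop know it can calculate the next buffer.
    writtenToDev = 1;
}
```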

The pointer Buffer is our audio data. We copy it to the Stream pointer, where SDL expects the new data to be. Once that's done, we set the boolean flag writtenToDev, so that when the next frame is set up we know it's time to calculate a new buffer. And this loops on and on:

- Calculate 1024 samples into our local audio buffer.

- Wait until SDL_AudioCallback gets called.

- Send the buffer to the audio device.

- Set our writtenToDev flag.

- New frame: Calculate 1024 samples into our local buffer.

- Wait until SDL_AudioCallback gets called.

- ...
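The loop above can be simulated in plain C without an audio device. This is a single-threaded sketch: AudioCallback and UpdateFrame are hypothetical stand-ins, and filling the buffer with a constant value is only a placeholder for real synthesis. In a real SDL program the callback runs on SDL's own audio thread, so the flag needs proper synchronization, for example an SDL_atomic_t or SDL_LockAudioDevice()/SDL_UnlockAudioDevice().

```c
#include <stdint.h>
#include <string.h>

#define FRAMES   1024
#define CHANNELS 2

static int16_t LocalBuffer[FRAMES * CHANNELS]; // interleaved L/R samples
static int writtenToDev = 1; // start as "consumed" so the first frame fills the buffer

// Stand-in for the SDL callback: hand the buffer to the device, raise the flag.
static void AudioCallback(void *UserData, uint8_t *Stream, int SizeInBytes)
{
    memcpy(Stream, UserData, (size_t)SizeInBytes);
    writtenToDev = 1;
}

// Hypothetical per-frame update. Returns 1 if it calculated a new buffer.
static int UpdateFrame(int16_t Value)
{
    if (!writtenToDev)
        return 0;                 // device hasn't taken the last buffer yet: wait
    for (int i = 0; i < FRAMES * CHANNELS; i++)
        LocalBuffer[i] = Value;   // placeholder for real synthesis
    writtenToDev = 0;
    return 1;
}
```

Calling UpdateFrame twice in a row only fills the buffer once; it refills again only after AudioCallback has copied the data out and raised the flag.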

But how do we fill our local buffer? What are these (16-bit) values we will send to the audio card? What makes the sound? Well, that's the topic of part 3...